Fix a hitch running Stable Diffusion locally

Sep 15, 2022

conda

stable diffusion

ai

python

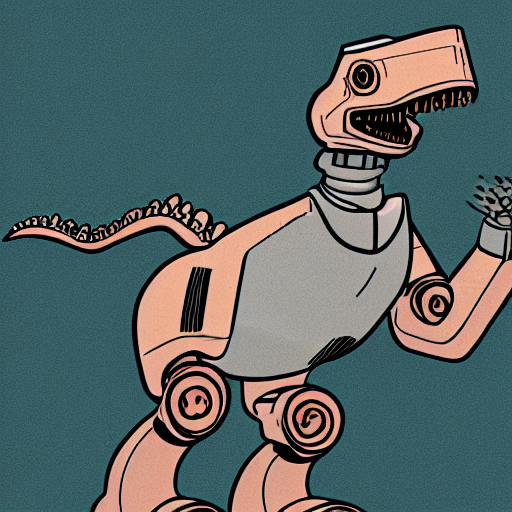

After a day spent cursing the EATX standards gods, offering up a few skinned-knuckle blood sacrifices, and going through the other appropriate motions to get the second-hand desktop computer with a GPU back up and running, I was able to spend this morning trying out Stable Diffusion running locally, which was the actual purpose of the exercise. Forthwith, the obligatory random AI-generated image:

Prompt: 'cartoon of a robot dinosaur'

I followed an excellent step-by-step guide and on the whole it all proceeded smoothly, but with one gotcha.

Everything looked good until I got to the final step of actually trying to run the interactive prompt with python scripts/dream.py (Step 5 in the linked guide).

Here it failed with a medium-sized stack trace that included the following error:

ImportError: cannot import name 'autocast' from 'torch'
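With hindsight, a quick first check for an import error like this is to ask Python where it would load torch from. The snippet below is only a sketch and not part of the guide: module_origin is a hypothetical helper name, and it assumes the culprit would live in the per-user site-packages directory (the one pip install --user writes to). It locates the module without importing it.

```python
import importlib.util
import os
import site


def module_origin(name):
    """Return (path, from_user_site) for a top-level module without importing it.

    Hypothetical helper for illustration: `from_user_site` is True when the
    module would be loaded from the per-user site-packages directory.
    """
    spec = importlib.util.find_spec(name)
    if spec is None or spec.origin is None:
        return None, False
    path = os.path.realpath(spec.origin)
    user_site = os.path.realpath(site.getusersitepackages())
    from_user_site = path.startswith(user_site + os.sep)
    return path, from_user_site


if __name__ == "__main__":
    path, from_user_site = module_origin("torch")
    print("torch would be loaded from:", path)
    print("that is the per-user site-packages:", from_user_site)
```

If the second line prints True while a Conda environment is active, the environment's own torch is probably being shadowed by an older copy installed outside it.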

I am not a Python guy. I don’t have quite the failure-to-disguise-my-horror with Python that I do with Node (and the rest of the benighted JavaScript ecosystem), but I haven’t really got to grips with it either, so when things go wrong I’m generally in the “google + flail wildly” problem-solving mode.

Googling turfed up a Reddit discussion of the same issue, where the problem seems to arise from previously installed Python packages. Since I had been using this machine for some video smoothing/upscaling, that fits the circumstances, but the discussion didn’t exactly address how to solve the problem. However, combining that info with a discussion in the Conda issue tracker, I was able to find and set the appropriate environment variable, which tells Python to ignore the per-user site-packages directory and so the previously installed packages:

export PYTHONNOUSERSITE=1

In my case I also appended that line to my .bashrc script to ensure it’s always set.

Edit: Seems I had actually just missed the appropriate comment, as it’s there and was posted 8 days ago! /me shakes fist at the Reddit UI... Oh well, hopefully this post is still useful to someone, even if it’s just future me!
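To see what PYTHONNOUSERSITE actually changes, here is a minimal sketch that starts a fresh interpreter with and without the variable and reports whether the per-user site-packages directory is enabled. The function name user_site_enabled is made up for this illustration. It relies only on the standard library's site.ENABLE_USER_SITE flag.

```python
import os
import subprocess
import sys


def user_site_enabled(extra_env=None):
    """Start a fresh interpreter and report whether it enables the user site.

    Hypothetical helper: `extra_env` is merged into a copy of the current
    environment after any existing PYTHONNOUSERSITE has been removed.
    """
    env = dict(os.environ)
    env.pop("PYTHONNOUSERSITE", None)  # start from a clean slate
    env.update(extra_env or {})
    result = subprocess.run(
        [sys.executable, "-c", "import site; print(site.ENABLE_USER_SITE)"],
        env=env,
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip() == "True"


if __name__ == "__main__":
    print("default:", user_site_enabled())
    print("with PYTHONNOUSERSITE=1:", user_site_enabled({"PYTHONNOUSERSITE": "1"}))
```

With the variable set, the child interpreter should report the user site as disabled. The default case depends on how Python was installed and launched, so it is printed rather than assumed.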

In addition, I had to re-create the Conda environment, as the previously created one was in a bad state, presumably because it depended on the previously installed packages that were now hidden.

Running the following amended command from Step 4 of the step-by-step guide got things into a good state:

conda env create --force -f environment.yaml

I could then start a new shell, run conda activate ldm to activate the Conda environment, and then start the command line with python scripts/dream.py. Note that I did not need to re-run the python scripts/preload_models.py command.
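For future me, the checks above can be rolled into a small pre-flight script to run inside the new shell before starting dream.py. This is a sketch under assumptions rather than anything from the guide: preflight is a hypothetical function, it assumes conda activate has set the CONDA_DEFAULT_ENV variable to the environment name (ldm here), and it treats a missing top-level torch.autocast as the sign of a too-old or shadowed torch.

```python
import os
import sys


def preflight(environ, no_user_site, torch_module, env_name="ldm"):
    """Return a list of human-readable problems; an empty list means all clear.

    Hypothetical helper: the caller passes in the environment mapping, the
    interpreter's no-user-site flag, and the imported torch module (or None).
    """
    problems = []
    if environ.get("CONDA_DEFAULT_ENV") != env_name:
        problems.append(f"conda environment {env_name!r} is not active")
    if not no_user_site:
        problems.append("per-user site-packages are still enabled")
    if torch_module is None:
        problems.append("torch is not importable")
    elif not hasattr(torch_module, "autocast"):
        problems.append("torch has no top-level 'autocast'")
    return problems


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        torch = None
    issues = preflight(os.environ, bool(sys.flags.no_user_site), torch)
    for issue in issues:
        print("problem:", issue)
    if not issues:
        print("environment looks sane")
```

Passing the environment and the module in as arguments keeps the check easy to try out without a GPU box to hand.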

It’s all up and running now, and it’s a lot of fun to play with. On my not-very-beefy i5-based machine with a single NVIDIA GeForce GTX 1080 Ti card (11 GiB of memory) and the default settings, it takes about 15 seconds to spit out a single image.